Agentic AI services

You built the agent. We design the experience around it. Agents that plan and act on a user's behalf turn the product into a decision-and-action surface. We build the trust surfaces, oversight patterns, and recovery flows so your agents reach production and stay there.

- From clever demo to product

The agent demo that wowed investors three months ago needs to behave like a product before the next round. Founders racing toward Series A get planning visibility, tool-use transparency, and human-in-the-loop checkpoints designed so the demo and the production product feel like the same thing.

- Adoption that survives QBR scrutiny

Heads of AI carry adoption into every Quarterly Business Review. Agentic features fail QBR scrutiny when users don't trust them enough to give up the manual workflow. We design approval gates and visibility into agent reasoning so usage numbers reflect real value.

- Agent patterns your system can extend

Most design systems were built for forms, tables, and dashboards — not AI agents that plan tasks or work across multiple steps. We expand your existing design system with AI-specific patterns your team needs to design and launch agent experiences directly inside Figma.

Our team’s work has won most of the world's best-known awards.

Where agentic AI breaks in the product

Most agentic AI products land in the gap between "the model can do this" and "users will let it." Founders see the disconnect during investor demos. Heads of AI see it in dashboard adoption numbers flatlining three months after launch. Design leads see it in their team layering autonomy patterns on top of a UI never built for them. The problems below are the ones we hear most often when we walk through agentic AI products with senior product leadership.

The demo flies, the pilot stalls

Investors saw an agent handling a multi-step task in 90 seconds. Three months into the pilot, enterprise users still won't let the agent act without watching it. The demo won the room; the product can't keep it. Founders feel this gap right around the post-Seed milestone review, when usage data starts contradicting the pitch.

Agentic features no one adopts

The release notes say "new AI agent." The dashboard says 4% weekly active. Heads of AI take that data into QBRs every quarter, and every quarter the C-suite asks the same question: why aren't we seeing the ROI we sold the board on? Adoption is the metric. Trust is the blocker.

Design system without agent patterns

Your system handles inputs, tables, and modals. It lacks a vocabulary for plan previews, tool-call confirmations, autonomy levels, and partial-recovery states. Design leads see the gap first, then watch every agent feature get designed twice — once by someone unfamiliar with the system, once by them.

Failure modes hidden until production

Agents handle the success path well. The hard work shows up in tool failures, ambiguous user intent, timeouts mid-plan, partial results, and permission denials. Teams discover these states after launch, when the agent quietly stops working for the user. The UI has nowhere to put the truth.

How we design agents that reach production

We've designed 30+ AI products since 2017. The work below is the playbook. We don't run experiments on your product. We bring the proven patterns and the trust surfaces your engineering team can implement against eval harnesses already in place.

Map autonomy levels to user trust

Agents don't operate at one autonomy level. We design the dial across four levels: read-only suggestions, assisted actions requiring approval, supervised execution, and full autonomy. The UI moves between modes based on task risk and audit requirements. Trust grows when users control where they sit.
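For teams that want to see the dial in code, here is a minimal sketch of the four levels as a state the UI can read. The risk tiers (`low`, `medium`, `high`), the capping rule, and every name below are illustrative assumptions, not a description of any client implementation.

```python
from enum import IntEnum


class AutonomyLevel(IntEnum):
    """The four positions on the autonomy dial, lowest to highest."""
    SUGGEST_ONLY = 0  # read-only suggestions
    ASSISTED = 1      # assisted actions requiring approval
    SUPERVISED = 2    # supervised execution
    FULL = 3          # full autonomy


# Hypothetical policy: the highest level each task-risk tier allows.
RISK_CEILING = {
    "low": AutonomyLevel.FULL,
    "medium": AutonomyLevel.SUPERVISED,
    "high": AutonomyLevel.ASSISTED,
}


def effective_level(user_choice: AutonomyLevel, task_risk: str,
                    audit_required: bool) -> AutonomyLevel:
    """Return the level the UI should run at for one task.

    The user picks where they sit; task risk and audit requirements
    can only lower that choice, never raise it.
    """
    ceiling = RISK_CEILING[task_risk]
    if audit_required:
        # Assumption: audited workflows never run fully unattended.
        ceiling = min(ceiling, AutonomyLevel.SUPERVISED)
    return min(user_choice, ceiling)


def needs_approval(level: AutonomyLevel) -> bool:
    """Approval gates appear at ASSISTED and below."""
    return level <= AutonomyLevel.ASSISTED
```

In this sketch the user's setting is a ceiling they control, and policy can only pull it down. That keeps the choice in the user's hands while still respecting task risk and audit requirements.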

Design the failure-mode UI first

The agent succeeds in the demo. In the product, it times out, picks the wrong tool, hits rate limits, partially completes, or asks for permissions it doesn't have. We design these states with the same rigor as success cases. The product feels honest, and honesty is what builds trust.
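One way to keep these states from being discovered after launch is to list them explicitly and check that each has a designed UI state. The sketch below assumes a hypothetical mapping from failure mode to headline and recovery action. The copy and names are placeholders, not production patterns.

```python
from dataclasses import dataclass
from enum import Enum


class FailureMode(Enum):
    """Failure modes named in the paragraph above."""
    TIMEOUT = "timeout"
    WRONG_TOOL = "wrong_tool"
    RATE_LIMITED = "rate_limited"
    PARTIAL_COMPLETION = "partial_completion"
    PERMISSION_DENIED = "permission_denied"


@dataclass(frozen=True)
class FailureState:
    headline: str         # what the user is told happened
    recovery_action: str  # the one thing they can do next


# Hypothetical copy; a real product would write this with its users.
FAILURE_UI = {
    FailureMode.TIMEOUT: FailureState(
        "The agent ran out of time mid-plan.", "Resume from last step"),
    FailureMode.WRONG_TOOL: FailureState(
        "The agent used the wrong tool for this step.", "Undo and pick a tool"),
    FailureMode.RATE_LIMITED: FailureState(
        "A connected service is limiting requests.", "Retry later"),
    FailureMode.PARTIAL_COMPLETION: FailureState(
        "Some steps finished, some did not.", "Review completed steps"),
    FailureMode.PERMISSION_DENIED: FailureState(
        "The agent lacks permission for this action.", "Request access"),
}


def missing_states(ui_map: dict) -> list:
    """List every failure mode that has no designed UI state yet."""
    return [mode for mode in FailureMode if mode not in ui_map]
```

Running `missing_states` as a check in design review or CI would flag any failure mode that still has nowhere to surface in the UI.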

Build trust surfaces into every action

Before the agent acts, the user sees the plan. After it acts, the user sees what happened, why, and how to reverse it. Plan previews, citations, edit and regenerate controls, audit logs, and one-click reversal sit on every agent surface. Enterprise procurement reviews stop being a hurdle and start being a checklist.

Extend your design system, don't replace it

We start from your tokens, components, and breakpoints. We extend the system with agent-specific patterns your team picks up and reuses: plan cards, approval gates, autonomy toggles, agent-state indicators, and partial-result containers. Your design lead keeps the system intact. Your team gains a pattern library for AI behavior.

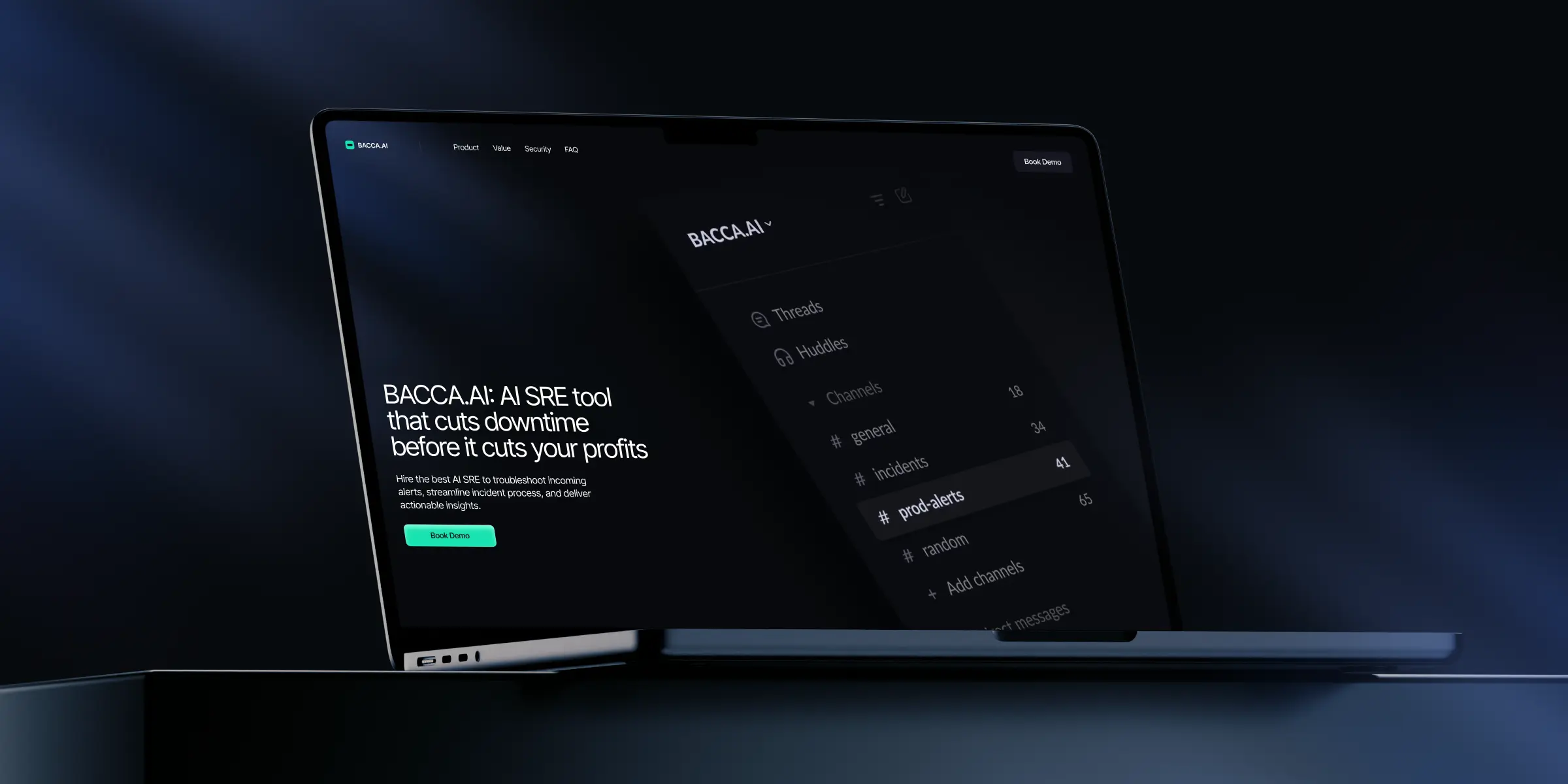

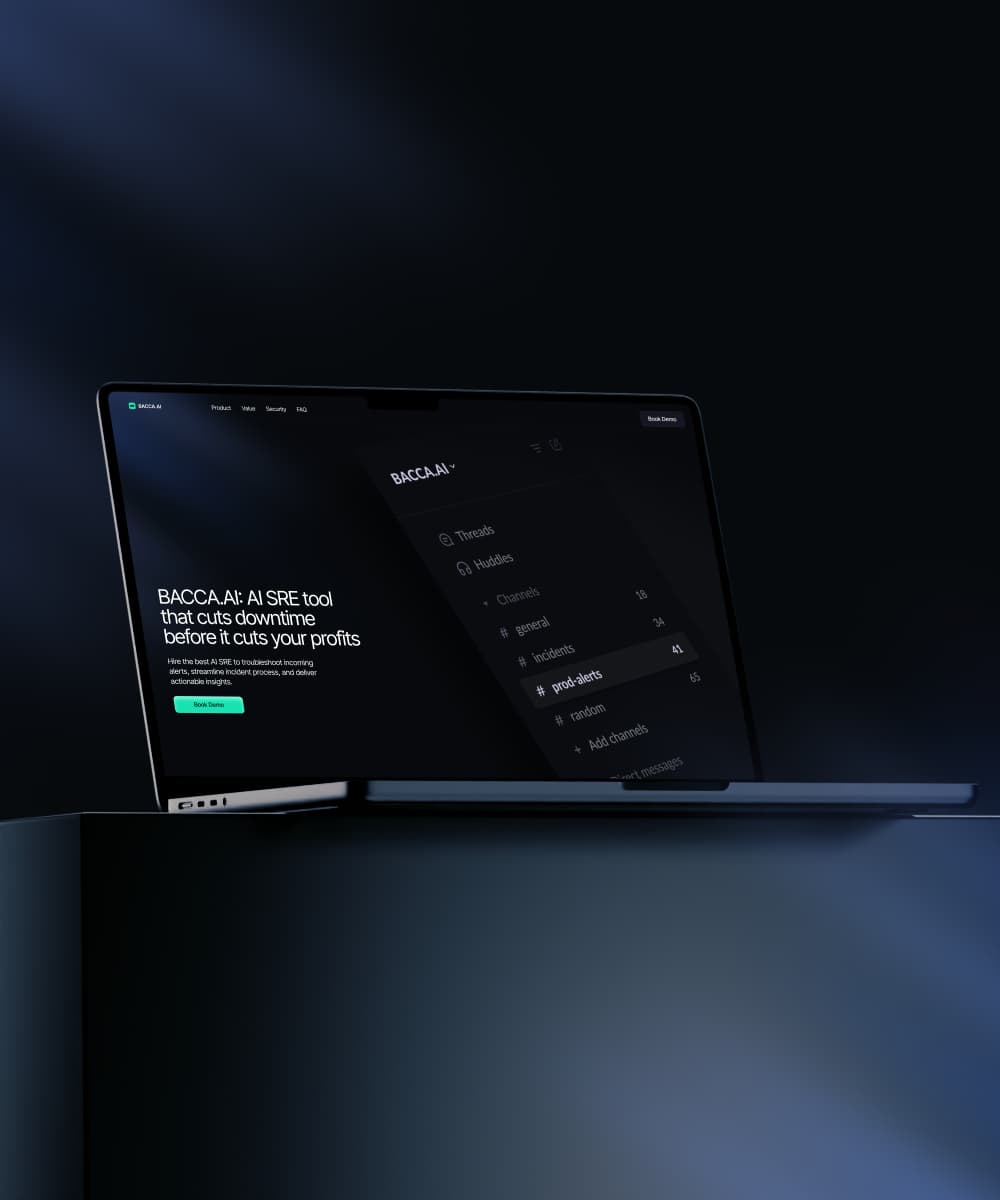

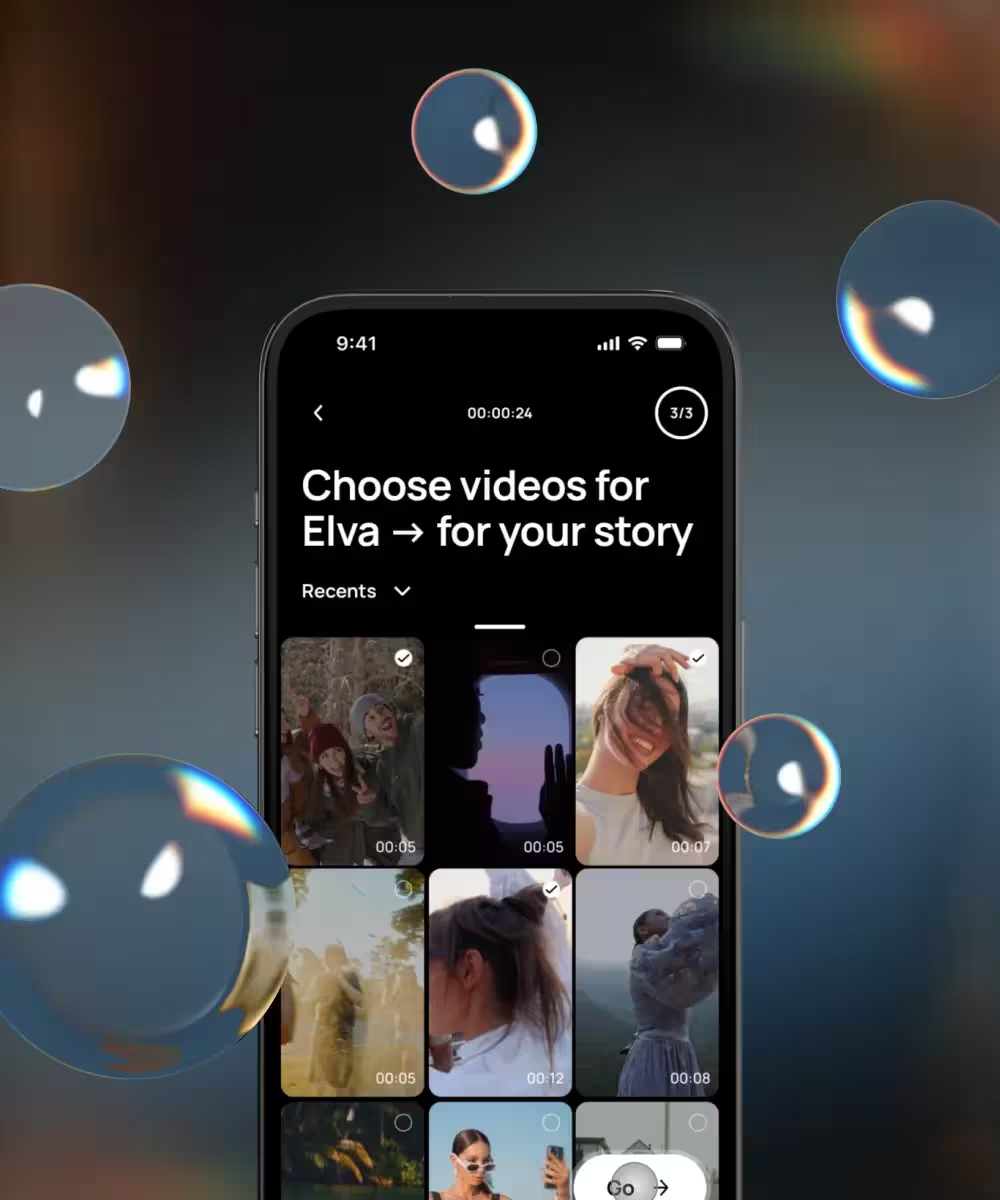

Agentic AI we've taken to production

Across 30+ AI products since 2017, we've designed agents for sales workflows, customer service, internal operations, regulated industries, and developer tools. Each engagement starts with the same question: what does the agent need to do, and what does the user need to see before they let it? The cases below are the answers, and the trust patterns inside them transfer across product categories.

What clients say about our agent work

Teams come to Lazarev.agency for agentic AI services when the gap between demo and product becomes the only thing standing between this round and the next, when adoption flatlines three months after launch, or when an internal design team needs senior AI UX support without losing control of the system. The feedback below shows what working with us looks like.

Lazarev.agency is a top tier design firm with focused execution and a team that's genuinely collaborative and iterative. We couldn't have asked for a smoother experience.

We've been working with Lazarev. from the seed through Series B stages of our product. It's amazing how they always research and find ideas that exceed our own initial vision!

Industries where our agent work has landed

We've designed agentic AI for sales platforms, customer service, healthcare workflows, financial services, legal operations, developer tools, and enterprise productivity. The patterns transfer across industries. The regulatory and trust requirements vary; the underlying UX rigor doesn't.

Who we are and why teams like yours work with us

We exist for B2B teams under pressure to turn an AI roadmap into visible product usage, expansion, and a safer story in front of the C‑suite and investors. If design isn’t moving revenue, adoption, or retention, it’s decoration. We design to avoid that. Since 2015, we’ve shipped 600+ products and earned 120+ awards for work on complex, data-heavy tools: fintech platforms, AI copilots, decision engines, and vertical SaaS. Our work has helped clients turn “we have AI features” into “our customers actually use and pay for them.”

We started designing AI products in 2017, long before “AI-native” became a buzzword. With 30+ AI products shipped, we focus on the hard part most teams struggle with: making complex intelligence feel simple, trustworthy, and obviously valuable in a demo, a POC, or a QBR. We’re a 40+ person team of UX strategists, product designers, and analysts who treat design as a business function. Every engagement is anchored to the metrics you care about: AI feature adoption, activation and retention in key accounts, time-to-decision in core workflows, and upgrade/expansion tied to AI-powered plans.

We operate on a simple principle: if you're not measuring design against business outcomes, you're wasting money.

What sets us apart from a typical agency or a single in-house hire is pattern recognition at scale. We’ve seen what works – and what quietly kills adoption – across hundreds of AI and data-heavy products. That lets us spot failure modes early, bring proven interaction patterns to your team, and reduce the risk that your next AI release is another unused toggle in a settings menu.

We start with research not because it’s “best practice,” but because designing without understanding your users, your market, and your revenue model is just guessing with nicer pixels. From there, we collaborate with your product, AI, and design leaders to define where AI should show up, how it should behave, and how to make it obvious, safe, and monetizable.

If you’re a Head of AI, Product, or an AI-native founder who needs AI capabilities to be seen, understood, and used now, not someday, we’re built to be that partner.

How an engagement runs from week one

Engagements start with a structured intake and an audit of your current agent surfaces, or your concept if you're pre-launch. We scope the work after the audit. Founder engagements run 4–8 months. Enterprise programs run 4–6+ months. Same template, different cadence.

You don't need more models. You need them to behave like a product.

Structured intake and stakeholder alignment

Week one is interviews, artifact review, and stakeholder alignment. The conversation crosses product, design, and engineering leadership, depending on the org. The goal is a written brief everyone agrees with before design starts. Misalignment after week one costs more than misalignment before.

AI UX audit of current surfaces

We audit your existing agent surfaces against the failure-mode patterns we've built across 30+ AI products. Output is a prioritized list of trust gaps and pattern debts. Mature products use this as a redesign roadmap. Pre-launch products use it to define the product before code commits.

Pattern design and system extension

We design the agent-specific patterns the product needs, working inside your design system or building one if needed: plan cards, approval gates, autonomy toggles, audit views, partial-result containers, and recovery flows. Each pattern is documented for engineering, build-ready by handoff, and tested against your eval harness inputs.

Prototype, test, and iterate with users

We prototype the highest-risk flows and test with synthetic users and real users from your customer base. Findings feed back into the patterns and into your team's eval harness. Two to three iteration cycles bring the trust surfaces to a level enterprise procurement and end users both accept.

Final handoff and adoption support

Final handoff includes documented patterns, motion specs, eval-harness inputs, and a 30-day adoption window where our team supports your engineers and design team through implementation questions. The agent reaches production. Your design system gains a permanent AI vocabulary. Your team owns it after we step out.

FAQ

How fast can we go from kickoff to a production-ready agent?

For founder engagements with a focused agentic AI product, 4–8 months from kickoff to a production-ready surface and a launch-ready marketing story is the typical range. The variables are the number of agent surfaces, the depth of failure-mode coverage required, and whether brand, website, and demo are in scope.

For enterprise programs adding agentic capabilities to an existing platform, 4–6+ months is the typical range. The scoping conversation after intake confirms the cadence either way.

How does this work alongside our internal design team?

We work as a senior external pod under your design lead. We start from your tokens, components, and breakpoints. We extend the system with AI-specific patterns your team picks up and reuses. The internal team keeps ownership of the system.

Heads of AI who've worked with us tell us the trade-off they expected, losing control of the system to move faster, didn't happen. The system stayed intact. The patterns are theirs now.

What does this cost?

Pricing depends on the complexity of the agentic surfaces, the scope of work, pod size, and timeline. Founder engagements covering product, brand, demo, and motion sit at one end of the range. Enterprise programs adding agentic capabilities across a mature platform sit at the other.

A concrete estimate comes after the structured intake and audit phase, once the scope is defined together.

What's different about agentic AI UX compared to standard AI features?

A chatbot answers. An agent acts. The UX work has to cover planning visibility, approval gates, autonomy controls, audit trails, partial-result handling, and reversal. Each of those is a state a standard AI feature doesn't need. Skipping any of them is how agents end up impressive in demos and absent from production.

The shift from question-and-answer to decision-and-action is the whole reason agentic AI services exist as a discipline.

Why Lazarev.agency over an in-house build or another agency?

We've designed 30+ AI products in production since 2017, including agentic systems across sales, customer service, and regulated workflows. In-house teams often lack the AI UX pattern depth, since most haven't designed an agent before. Other agencies often lack the AI-native fluency to design failure-mode UI as carefully as success states.

We bring both, and we respond to scoping requests within one business day.