Before the AI flame was ever lit, the workforce was overloaded with repetitive tasks and unstructured workflows, wasting precious creative energy on work better delegated to automation.

Generative AI stepped in to tackle that gap. By offloading bureaucratic and largely mundane workload to smart agents, it freed human talent to focus on creativity and domain expertise. For a moment, it felt like a panacea.

A few years in, the cracks are visible.

Industries differ. Even within the same niche, user demands can sit at opposite ends of the spectrum. Having a one-dimensional agent (no matter how capable) run core operations no longer hits the spot.

So are we stuck? Not really. We just seem to be onto something new. One direction we might be heading is toward a user-generated use case framework.

In this blog, we discuss the current state of gen AI, how it benefits the economy and businesses across industries, and the ultimate conceptual shift that can revamp workflow optimization.

Key takeaways

- Gen AI has outgrown industry templates. Treating generative AI as industry-specific creates scale, precision, and context problems that compound as usage grows.

- Prompts are not a strategy. Reducing user diversity to prompt variations leads to overgeneralized output and context decay across workflows.

- The user-generated use case framework introduced by Lazarev.agency unlocks compounding intelligence. When systems learn from real use cases, they evolve from reactive tools into adaptive systems.

- The future of gen AI is structural. Competitive advantage will come from smarter frameworks around AI.

Where does generative AI stand today?

“People are using [AI] to create amazing things. If we could see what each of us can do 10 or 20 years in the future, it would astonish us today.” — Sam Altman, cofounder and CEO of OpenAI

That future Altman points to isn’t distant.

Gen AI has already moved past the experimentation phase. Conversational UI and AI agents change how businesses operate.

Embedded in daily work, they reshape how teams write, research, design, code, decide, and do everything in between.

What’s striking, though, is not the speed of adoption, but the growing mismatch between how widely gen AI is used and how poorly it’s integrated.

The current state of gen AI is defined by momentum, underutilization, and a clear signal: while the technology sprints ahead, organizational thinking jogs behind.

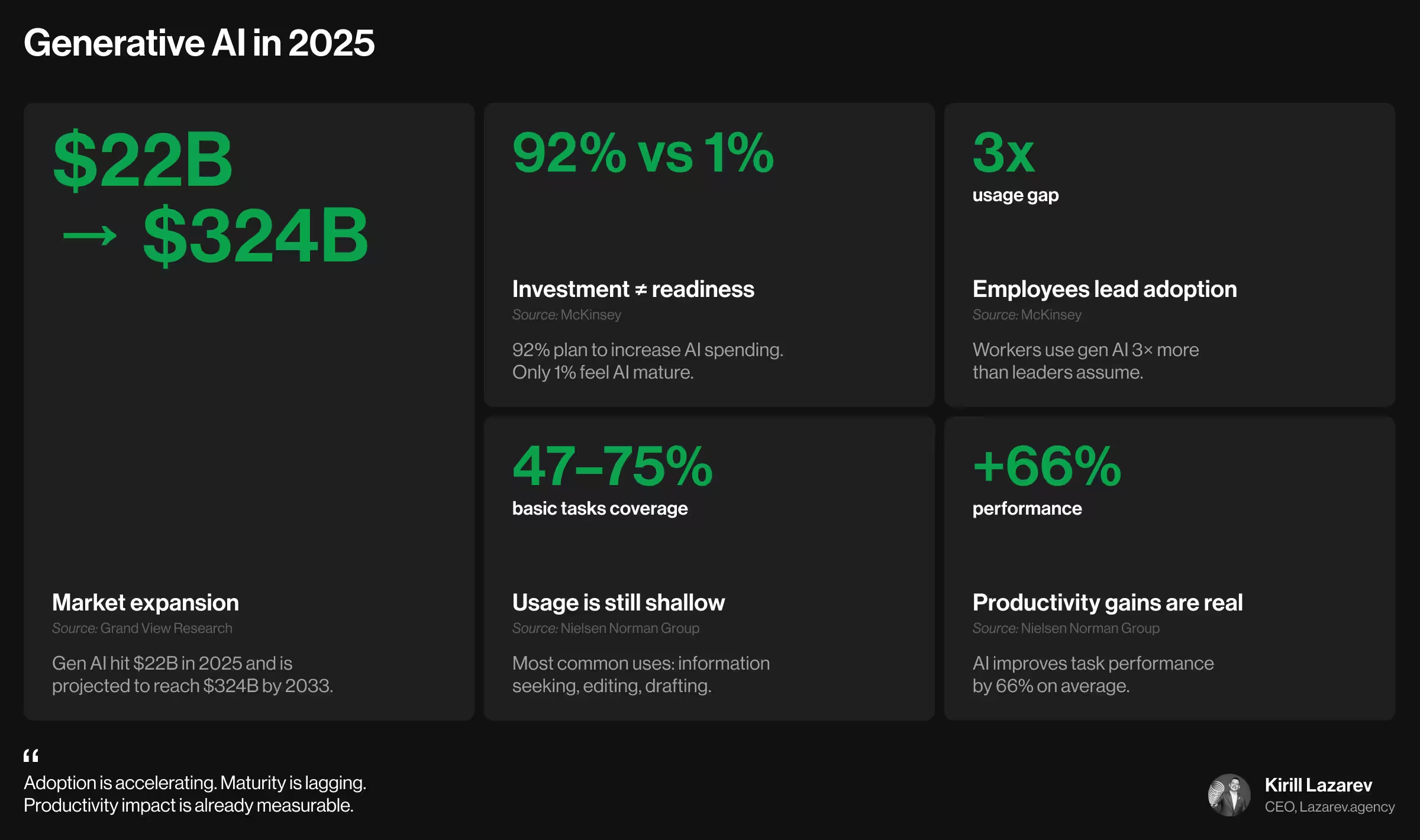

Need more than words? Here’s what the data tells us about where gen AI stands right now:

- Market momentum is explosive. According to Grand View Research, the global generative AI market reached over $22 billion in 2025 and is projected to surpass $324 billion by 2033.

- Everyone is investing. Almost no one is ready. McKinsey reports that 92% of companies plan to increase AI investment over the next three years. Yet only 1% consider themselves AI mature.

- Employees are ahead of leadership. McKinsey research highlights that workers are using gen AI 3 times more than leaders assume. Adoption is bottom-up, improvised, and largely invisible to management.

- Most gen AI usage is painfully basic. According to Nielsen Norman Group (NNG), the dominant use cases today are information seeking (57–75%), editing (52%), and drafting content (47%). Far fewer people use AI for deeper work like analysis, coding support, ideation, or multimedia creation. Why? Most tools are still generic, context-blind, and poorly aligned with real jobs.

- Yet, productivity gains are already substantial. Across NNG studies, using gen AI improves task performance by 66% on average. The biggest gains show up in complex tasks and among less-experienced workers.

Let’s pause on that last number for a moment.

If generic, one-size-fits-all gen AI tools already deliver a 66% performance lift, imagine the gains once generative AI fits user needs like a glove.

Once it understands domain context, adapts to skill levels, and integrates smoothly into practical workflows, the ceiling moves dramatically higher. There’s enormous potential locked inside this gap.

Now is the time to recognize and leverage it.

What’s wrong with the current conception of gen AI?

Across industries, gen AI is understood as an algorithm-based tool that generates text, images, code, or video on demand.

That framing isn’t wrong. But it’s also where our collective understanding starts to wobble, because it focuses on what gen AI produces, not on how it adapts or (as often happens) misfires once human users enter the system.

To see this clearly, it helps to learn from the giants shaping the narrative.

- McKinsey & Company: “Generative artificial intelligence (AI) describes algorithms (such as ChatGPT) that can be used to create new content, including audio, code, images, text, simulations, and videos.”

- IBM: “Generative AI, sometimes called gen AI, is artificial intelligence (AI) that can create original content such as text, images, video, audio, or software code in response to a user’s prompt or request.”

- NVIDIA: “Generative AI models use neural networks to identify the patterns and structures within existing data to generate new and original content. One of the breakthroughs with generative AI models is the ability to leverage different learning approaches, including unsupervised or semi-supervised learning for training.”

- Microsoft: “While conventional AI typically analyzes data to find patterns, generative AI works differently — it creates new data… Instead of following set rules, generative AI studies the basic structure of training data and uses advanced machine learning to generate new content. This lets it make new outputs that match what it's learned.”

What can we learn from these definitions? The structural paradigm upon which AI systems are built. And it looks like this:

- Pattern extraction from large datasets

- Probabilistic generation

- Training on vast, generalized datasets

- Prompt-driven interaction as the control surface

- Generalization across domains, formats, and tasks

This architecture explains why gen AI feels powerful and also why it often feels unpredictable. It’s brilliant at responding to inputs, but it treats most inputs as statistically equivalent.

And that’s where its Achilles’ heel hides.

“Traditional interpretations of gen AI assume […]. They overlook that real-world use is defined by wildly different goals, mental models, constraints, and decision-making styles. True, modern gen AI systems are sophisticated enough to handle diversity. The problem is, they still do so from a generalized point of view. Technology thrives on diversity only when it’s explicitly programmed for it. Now imagine the upgrade when diversity becomes the system-level priority.”

Kirill Lazarev

Where does this insight take us next?

AI transformation isn’t just tech — it’s a strategic discipline of redesigning how work operations, decisions, and products function once intelligence takes the lead.

Everything holds together until the idea of conceptual diversity enters the scene. What do we mean by it?

Different users don’t just want different outputs. They operate with unique mental models, incentives, and definitions of what a “good” interaction looks like.

Treating user differences as prompt variations is a leaky shortcut.

Which brings us to the next step in the evolution of generative AI. We call it the user-generated use case framework, a shift from “one model, many prompts” to diversity-first systems.

What does the future of gen AI look like? Unfolding the user-generated use case concept

Approaching generative AI as industry-specific rather than user-centered creates a predictable set of problems. And they compound fast.

When gen AI is designed around static industry assumptions, a few structural limitations surface:

- Limited scalability due to weak self-learning across real, evolving workflows.

- Misalignment with diverse user needs, where differences are reduced to prompt tweaks rather than structural variation.

- Overgeneralized outputs that sound right but miss the mark operationally.

- Context decay, where insights from one use case don’t meaningfully improve the next.

Here’s our take on the current trajectory of gen AI:

💡If a system accommodates everything, it succeeds at nothing.

Once a system tries to serve every abstract scenario equally, it sacrifices precision. And without precision, intelligence loses its differentiating power.

So what’s the alternative?

Introducing the user-generated use case framework

At first glance, this idea sounds counterintuitive:

💡Gen AI must be vague enough to scale and structured enough to stay useful.

But that tension is exactly the point.

Vagueness here means accommodating variety, not randomness. It denotes designed openness: the ability to absorb variation without collapsing into generic output.

This is where the user-generated use case framework comes in.

Instead of defining what gen AI should do per industry, the framework allows users to define what the system becomes per use case and lets the system learn from each one.

How a user-generated use case framework works

Imagine you’re running a multi-million-dollar ad campaign for a SaaS company rolling out a major product upgrade.

Campaign brief:

- Product description: A feature-dense platform update targeting enterprise, mid-market, and power users.

- Campaign objective: Drive adoption, reduce churn risk, and reposition the product’s value narrative.

- Diversity of use cases:

  - CMOs need strategic narratives and KPI decks.

  - Product marketers need launch messaging and feature comparisons.

  - Sales teams need tailored pitch assets per vertical.

  - Designers need UI references and visual direction.

  - Growth teams need experiment briefs and performance hypotheses.

Traditional gen AI tools treat all of this as one task with many prompts. The result is inconsistent output that struggles to serve multiple target audiences around the same product.

The solution: an AI agent built on a user-generated use case framework.

Instead of being locked into predefined industry scenarios, the agent operates as industry-agnostic by default and becomes specialized through use. Each completed use case feeds structured context back into the system. This strategic loop helps refine future outputs without resetting intelligence every time.

The value proposition: Under this model, business teams can create any deliverable, tool, or even subsidiary agent internally. This eliminates the need for external design, coding, or AI expertise.

Product structure: The agent is modular. Depending on your project goals, it might include modules or variables such as:

- Target audiences

- KPIs and performance decks

- Brand and market references

- UI kits and design systems

- Code snippets and logic blocks

Each module evolves as users generate new use cases.
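The loop described above (a user defines a use case, the agent draws on its modules, and each completed use case feeds structured context back) can be sketched in a few lines of Python. This is only an illustrative sketch, not the framework's actual implementation: the names `UseCase`, `Agent`, `build_context`, and `complete`, as well as the module and role labels, are hypothetical, and no model call is shown.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class UseCase:
    """A user-defined use case (all field names are hypothetical)."""
    role: str            # who is asking, e.g. "sales"
    deliverable: str     # what they need, e.g. "pitch deck"
    modules: list[str]   # which modules the use case draws on


@dataclass
class Agent:
    """Industry-agnostic by default; specializes as use cases complete."""
    # module name -> structured context entries learned so far
    memory: dict[str, list[str]] = field(default_factory=dict)

    def build_context(self, use_case: UseCase) -> list[str]:
        """Collect what earlier use cases taught the requested modules."""
        context: list[str] = []
        for name in use_case.modules:
            context.extend(self.memory.get(name, []))
        return context

    def complete(self, use_case: UseCase, learned: dict[str, str]) -> None:
        """Feed structured context from a finished use case back into modules."""
        for name, insight in learned.items():
            self.memory.setdefault(name, []).append(f"{use_case.role}: {insight}")


agent = Agent()
sales = UseCase("sales", "pitch deck", ["target_audiences", "kpis"])
print(agent.build_context(sales))  # [] because nothing has been learned yet

# The sales use case finishes and reports one insight back.
agent.complete(sales, {"target_audiences": "enterprise buyers ask about migration cost"})

# A different role reuses that context instead of starting from zero.
marketing = UseCase("product_marketing", "launch messaging", ["target_audiences"])
print(agent.build_context(marketing))
# ['sales: enterprise buyers ask about migration cost']
```

The design choice worth noting is that context is stored per module rather than per prompt, so an insight gathered by one team is available to any later use case that touches the same module.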

Why is this the most probable trajectory for generative AI?

It shifts the focus from building smarter models to building smarter structures around those models. Gen AI systems should be capable of learning from intent. That doesn’t flatten diversity. Instead, it operationalizes it.

Be the first to recognize the gen AI shift and leverage it

Generative AI has reached an inflection point.

Scaling gen AI through broader generalization leads to diminishing returns. Precision doesn’t come from adding more prompts or piling on features. It comes from systems designed to learn from how people work.

That’s exactly where the user-generated use case framework changes the game.

At Lazarev.agency, an AI UX design agency, we work at the intersection of gen AI, UX strategy, and real-world product systems. Our work moves beyond generic AI adoption toward adaptive, diversity-first intelligence.

If you’re exploring how this framework could apply to your product, workflows, or internal tools, let’s talk. The next phase of generative AI will be won by those who structure it best.
