Marketing qualified lead (MQL) to sales qualified lead (SQL) conversion reflects how well marketing and sales teams interpret buying intent. When this metric plateaus or, worse, declines, operational misalignment is almost always among the chief culprits.

Vague conversion data, unclear documentation, delayed follow-ups, and misread intent signals all undermine how reliably (if at all) your business turns qualified leads into sales opportunities.

In this article, we distinguish MQLs from SQLs, discuss what disrupts the transition between them, and explain how to design a sustainable system that moves qualified leads forward with consistency and intent.

Key takeaways

- MQL→SQL is a decision system. Conversion improves when the lead qualification process, marketing messages, handoff, and stages across the sales funnel operate as a single system anchored in lead behavior.

- Intent data, timing, and ownership matter more than lead count. Most breakdowns come from weak intent signals or ambiguous ownership during the MQL to SQL handoff.

- A lead scoring system is effective only if it triggers action. A lead scoring model must be actionable: if crossing a threshold doesn’t change how the team responds to a lead, it won’t improve conversion.

- Hesitation reveals where design and messaging fail. Pauses at pricing, shallow product usage, and stalled follow-ups point to experience gaps that a solid design strategy can directly address.

What’s the difference between MQLs and SQLs?

MQLs and SQLs mark different decision points in the revenue funnel. Confusing the two or collapsing them into a single stage creates false confidence in funnel performance and puts unnecessary barriers in the buyer’s path.

An MQL signals interest worth monitoring, whereas an SQL indicates readiness for a sales conversation.

“When MQLs are treated as ready-to-buy prospects, sales effort is wasted on leads who aren’t yet ready to commit. When SQL criteria are vague, marketing efforts are being optimized without a clear target. Both scenarios sabotage growth for the same reason: missing context.”

Kirill Lazarev

We’ve compared the two lead types against 7 decision-defining criteria so you can see exactly where interest transitions into readiness.

🔍 For a deeper understanding of funnel stages, revisit the anatomy of a conversion funnel.

What hinders the MQL to SQL transition?

When MQL to SQL conversion goes amiss, it usually points to structural issues in how teams qualify and respond to demand.

Below are the most common factors that prevent MQLs from progressing into SQLs, and how they show up in real revenue operations.

1. Poor communication between marketing and sales

Misalignment between marketing and sales is the most persistent blocker in the MQL to SQL transition. When teams operate with different definitions of “qualified”, handoffs become subjective and easy to dismiss.

This misalignment manifests as:

- Marketing optimizing for engagement metrics that sales doesn’t consider buying intent

- Sales rejecting leads without a documented rationale

- Ambiguous ownership during the handoff window, where no team feels accountable

🟩 What’s needed: Shared qualification criteria and explicit ownership rules. ➡️ An MQL should become an SQL only when it meets transition criteria that marketing and sales have agreed on. And never treat SQL rejections as dead ends: when reviewed systematically, they show how accurately the system models buyer readiness and where qualification logic needs to evolve.

2. Weak lead qualification

Many lead scoring models don’t dig deep enough to detect true buying intent. Leads accumulate points for content engagement or task completion, so high scores often indicate curiosity rather than readiness to buy.

This shows up when:

- High-scoring leads stall after initial contact

- Product usage or behavioral depth is ignored

- All MQLs receive the same follow-up urgency

🟩 What’s needed: Intent-weighted signals. ➡️ Strong indicators include repeated evaluation behaviors, deep and sustained product interaction, pricing exploration, and explicit comparisons between solutions.

3. Slow response times

Interest evaporates quickly. Even well-qualified MQLs cool off when follow-up gets trapped in confusing internal approvals. The longer the gap between intent and response, the harder it is to restart the conversation.

Common patterns:

- Leads waiting hours or days for first contact

- Sales responses arriving after the peak of intent has already passed

- Automation triggering too late to be useful

🟩 What’s needed: Optimized response time. ➡️ Automated alerts for high-intent actions and predefined follow-up paths help ensure leads are engaged while their interest and intent are at their highest.
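As a minimal sketch of such an alert, assuming each intent event and each lead’s first sales contact carry timestamps, the check below flags leads whose earliest high-intent action went unanswered past a response window. The action names in `HIGH_INTENT_ACTIONS` and the one-hour `SLA` are hypothetical placeholders, not recommended values.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

# Hypothetical high-intent actions; replace with the events your stack records.
HIGH_INTENT_ACTIONS = {"pricing_page_repeat", "demo_request", "trial_milestone"}
# Hypothetical first-contact target, not a benchmark.
SLA = timedelta(hours=1)


@dataclass
class IntentEvent:
    lead_id: str
    action: str
    occurred_at: datetime


def sla_breaches(
    events: list[IntentEvent],
    first_contact: dict[str, datetime],
    now: datetime,
) -> list[str]:
    """Return IDs of leads whose earliest high-intent action was not answered within SLA."""
    earliest: dict[str, datetime] = {}
    for event in events:
        if event.action not in HIGH_INTENT_ACTIONS:
            continue  # low-intent activity never raises an alert
        seen = earliest.get(event.lead_id)
        if seen is None or event.occurred_at < seen:
            earliest[event.lead_id] = event.occurred_at

    breaches = []
    for lead_id, intent_at in earliest.items():
        deadline = intent_at + SLA
        contacted_at: Optional[datetime] = first_contact.get(lead_id)
        if contacted_at is None:
            if now > deadline:
                breaches.append(lead_id)  # still waiting and the window has closed
        elif contacted_at > deadline:
            breaches.append(lead_id)  # contacted, but only after the window closed
    return sorted(breaches)
```

Run on a short schedule, a check like this turns “follow up quickly” into an alert someone has to act on, and the same timestamps later feed the time-to-first-contact metric.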

4. Ineffective lead nurturing

Generic nurturing assumes all leads have the same questions. In reality, MQLs and near-SQLs face very different challenges and require guidance tailored to their stage in the buying journey.

🔍 This is a common side effect of skipping foundational user understanding, a risk we explore in detail in our article on the risks of skipping UX research.

This issue surfaces when:

- Content doesn’t reflect the decision stage

- Messaging repeats information the lead already knows

- Personal context is ignored across channels

🟩 What’s needed: Flexible lead nurturing. ➡️ Early-stage leads need clarity, while late-stage leads need proof.

5. Measurement and learning gaps

Many teams track MQL to SQL conversion as a success metric, but don’t analyze why leads fail to convert. SQL rejection is often treated as a loss instead of a diagnostic signal.

Consequences:

- Repeating the same targeting mistakes cycle after cycle

- Optimizing for conversion rate without assessing deal quality

- No visibility into systemic qualification issues

🟩 What’s needed: Structured feedback loops. ➡️ Every rejected SQL should feed insights into scoring logic and targeting assumptions. Without this learning layer, conversion rates fluctuate without achieving sustainable growth.

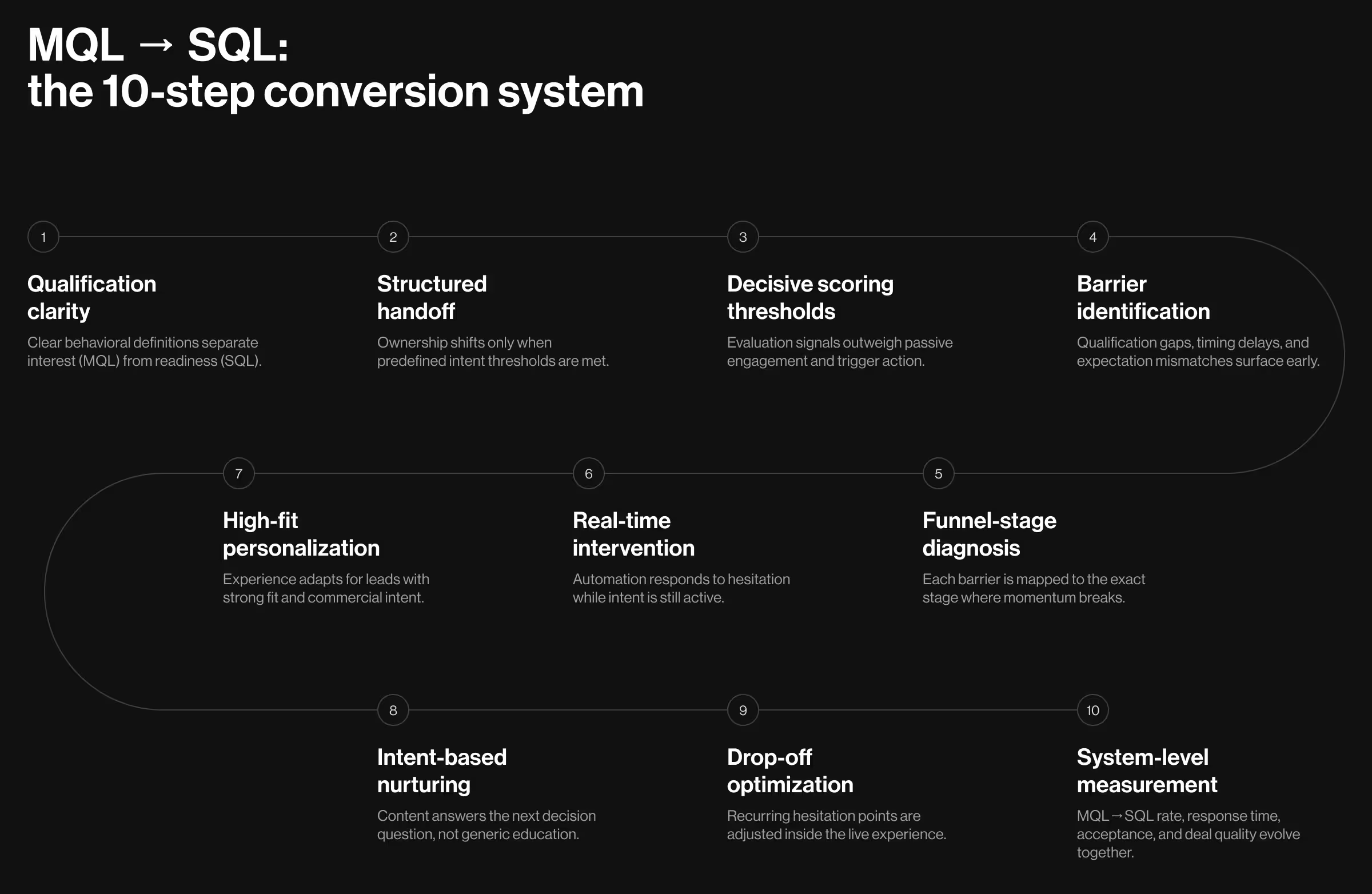

How to move a lead from MQL to SQL

Improving MQL to SQL conversion requires a structural approach.

The strategies below focus on establishing that system-level logic by clarifying qualification criteria, aligning ownership, addressing user hesitation, and refining the path from early interest to sales readiness.

1. Start with MQL and SQL qualification criteria

Clear qualification criteria are the backbone of predictable revenue. They define when a lead deserves attention and when it deserves a sales conversation.

MQLs should demonstrate sustained interest, repeated engagement with problem- or solution-focused content, and basic contextual fit like role, company size, or industry.

By contrast, SQLs should show explicit evaluation signals (e.g., pricing exploration or trial depth) and alignment with your sales motion and deal scope.

✅ Implementation checklist:

- Review the last 20 closed-won deals and isolate shared pre-SQL behaviors

- Remove engagement signals that appear equally in closed-lost deals

- Separate interest confirmation (MQL) from sales readiness confirmation (SQL)

- Document both definitions in a shared system used by marketing and sales
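The first two checklist steps can be sketched as a simple comparison of signal frequencies. This assumes you can export, for each closed deal, the set of behaviors recorded before it became an SQL; the signal names and the `min_lift` cutoff of 0.2 are hypothetical.

```python
from collections import Counter


def signal_rates(deals: list[set[str]]) -> dict[str, float]:
    """Share of deals in which each pre-SQL signal appeared at least once."""
    counts = Counter(signal for deal in deals for signal in deal)
    return {signal: n / len(deals) for signal, n in counts.items()}


def discriminating_signals(
    won: list[set[str]], lost: list[set[str]], min_lift: float = 0.2
) -> dict[str, float]:
    """Keep signals that appear noticeably more often in won deals than in lost ones.

    Signals seen about equally in both groups are dropped, as the checklist suggests.
    Each kept signal maps to its won-rate minus lost-rate difference.
    """
    won_rates = signal_rates(won)
    lost_rates = signal_rates(lost)
    kept = {}
    for signal, won_rate in won_rates.items():
        lift = won_rate - lost_rates.get(signal, 0.0)
        if lift >= min_lift:
            kept[signal] = round(lift, 2)
    # Strongest differentiators first.
    return dict(sorted(kept.items(), key=lambda item: -item[1]))
```

With only 20 won deals the rates are noisy, so treat the output as a shortlist for discussion between marketing and sales rather than a final definition.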

2. Formalize the MQL to SQL handoff

The handoff between marketing and sales is a decision checkpoint. When ownership shifts without shared criteria or sufficient context, leads stall, and sales ends up re-qualifying work that should already be settled.

Start by clarifying ownership:

- Marketing owns the lead until predefined intent and fit conditions are met

- Sales takes over once readiness signals are confirmed

Next, standardize the handoff itself:

- A documented handoff rule agreed on by marketing and sales

- A trigger tied to observable behavior, e.g., pricing exploration, workflow-level feature usage, or demo intent

- Clear response expectations, with follow-up timing tracked reliably
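One way to keep the handoff rule documented and unambiguous is to encode it as data both teams can read. A minimal sketch, assuming hypothetical fit fields (`role`, `company_size`), hypothetical trigger names, and a hypothetical four-hour response window; none of these come from a specific CRM.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import Optional


@dataclass(frozen=True)
class HandoffRule:
    """A documented rule agreed on by marketing and sales (all values hypothetical)."""
    target_roles: frozenset[str]
    min_company_size: int
    triggers: frozenset[str]      # observable behaviors that hand the lead to sales
    response_window: timedelta    # how quickly sales is expected to follow up


@dataclass
class Lead:
    lead_id: str
    role: str
    company_size: int
    behaviors: set[str] = field(default_factory=set)


@dataclass
class Handoff:
    lead_id: str
    owner: str
    trigger: Optional[str]
    respond_by: Optional[datetime]


def evaluate_handoff(lead: Lead, rule: HandoffRule, now: datetime) -> Handoff:
    """Marketing keeps the lead until fit and at least one trigger are both confirmed."""
    fits = lead.role in rule.target_roles and lead.company_size >= rule.min_company_size
    fired = sorted(lead.behaviors & rule.triggers)
    if fits and fired:
        # Ownership moves to sales with an explicit, trackable follow-up deadline.
        return Handoff(lead.lead_id, "sales", fired[0], now + rule.response_window)
    return Handoff(lead.lead_id, "marketing", None, None)
```

Because the rule is a single shared object, changing a trigger or the response window becomes a visible, agreed edit rather than a quiet shift in one team’s interpretation.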

🔦 Practical insight: Treat SQL rejection as system feedback. When sales sends leads back, capture the single dominant reason behind it. Track patterns. In the long run, this mindset helps close the gap between what marketing forwards and what sales accepts.

3. Design a lead scoring model with specific thresholds

A lead score should answer one question: should sales act now? If it only ranks interest, it leaves sales guessing.

Start by separating evaluation behavior from awareness:

- Evaluation signals: pricing page depth, demo requests, product usage milestones, repeat visits to solution-specific pages

- Contextual signals: blog reads, generic downloads, newsletter engagement

Evaluation signals carry decisive weight. Contextual engagement exists to inform judgment. Inflated scores from passive activity give sales a false sense of urgency.

Scores should map directly to action:

- Below threshold → continue targeted nurturing

- At threshold → sales review or controlled outreach

- Above threshold → immediate sales engagement

🔦 Practical insight: Avoid vague or overlapping ranges. Ambiguity delays response and weakens trust in the system.
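A minimal scoring sketch that follows the rules above: evaluation signals carry most of the weight, contextual signals are capped so passive activity cannot push a lead toward sales, and every score maps to exactly one action. It reads “at threshold” as a fixed band between two hard cutoffs. The signal names, weights, the cap of 10, and the cutoffs of 40 and 60 are hypothetical illustration values, not benchmarks.

```python
from enum import Enum

# Hypothetical weights: evaluation signals dominate the score.
EVALUATION_WEIGHTS = {
    "pricing_page_depth": 15,
    "demo_request": 30,
    "usage_milestone": 20,
    "solution_page_repeat": 10,
}
# Contextual signals inform judgment but are capped so they can't inflate urgency.
CONTEXTUAL_WEIGHTS = {"blog_read": 2, "generic_download": 3, "newsletter_click": 1}
CONTEXTUAL_CAP = 10

REVIEW_THRESHOLD = 40  # hard cutoff: sales review or controlled outreach
ENGAGE_THRESHOLD = 60  # hard cutoff: immediate sales engagement


class Action(Enum):
    NURTURE = "continue targeted nurturing"
    REVIEW = "sales review or controlled outreach"
    ENGAGE = "immediate sales engagement"


def score_lead(signal_counts: dict[str, int]) -> int:
    """Sum weighted signal counts, capping the contextual contribution."""
    evaluation = sum(
        EVALUATION_WEIGHTS[s] * n for s, n in signal_counts.items() if s in EVALUATION_WEIGHTS
    )
    contextual = sum(
        CONTEXTUAL_WEIGHTS[s] * n for s, n in signal_counts.items() if s in CONTEXTUAL_WEIGHTS
    )
    return evaluation + min(contextual, CONTEXTUAL_CAP)


def next_action(score: int) -> Action:
    """Map every score to exactly one action, with no gray zone."""
    if score >= ENGAGE_THRESHOLD:
        return Action.ENGAGE
    if score >= REVIEW_THRESHOLD:
        return Action.REVIEW
    return Action.NURTURE
```

The cap is the key design choice: a lead who reads twenty blog posts still scores 10, so curiosity alone never reaches sales, while one demo request plus a usage milestone lands in the review band.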

4. Identify key barriers to MQL to SQL conversion

Combine sales feedback with funnel behavior to spot repeatable patterns. A short UX design sprint is one of the fastest ways to surface the dominant ones.

The most common barriers fall into 3 categories:

- Qualification gaps: Leads meet scoring thresholds but collapse in early sales conversations.

▶️ Diagnose by comparing MQL criteria across closed-won and rejected SQLs. Look for signals present in wins but missing in stalled leads.

- Timing issues: High-intent actions are followed by delayed follow-up or sudden disengagement.

▶️ Diagnose by tracking the time between key intent signals and first sales contact, then reviewing drop-off patterns tied to delays.

- Mismatched expectations: Leads engage actively but hesitate when scope, pricing, or process enters the conversation.

▶️ Diagnose by reviewing pre-SQL content and messaging against live sales discussions. Misalignment here surfaces quickly.

Identifying barriers only pays off when it leads to action. Operationalize the analysis:

- Require sales to tag every SQL rejection with a single dominant reason

- Review rejection patterns monthly

- Anchor each barrier to the exact funnel stage where momentum slows

- Prioritize barriers that recur across channels and segments
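To make the monthly review concrete, here is a minimal sketch that enforces one reason per rejection from a fixed list, counts reasons by month, and flags reasons that recur across several channels. The reason codes, channel names, and the `min_channels=2` cutoff are hypothetical.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass
from datetime import date

# Hypothetical controlled vocabulary: exactly one dominant reason per rejection.
REASONS = {"no_budget", "wrong_role", "not_evaluating", "bad_timing", "already_customer"}


@dataclass
class Rejection:
    lead_id: str
    reason: str
    channel: str
    rejected_on: date

    def __post_init__(self) -> None:
        # Free-text reasons can't be aggregated, so reject anything off-list.
        if self.reason not in REASONS:
            raise ValueError(f"unknown rejection reason: {self.reason!r}")


def monthly_counts(rejections: list[Rejection]) -> dict[str, Counter]:
    """Count rejection reasons per calendar month ("YYYY-MM")."""
    by_month: dict[str, Counter] = defaultdict(Counter)
    for r in rejections:
        by_month[r.rejected_on.strftime("%Y-%m")][r.reason] += 1
    return dict(by_month)


def recurring_reasons(rejections: list[Rejection], min_channels: int = 2) -> list[str]:
    """Reasons seen in at least `min_channels` channels: likely systemic, not one-off."""
    channels: dict[str, set[str]] = defaultdict(set)
    for r in rejections:
        channels[r.reason].add(r.channel)
    return sorted(reason for reason, seen in channels.items() if len(seen) >= min_channels)
```

A reason concentrated in one channel usually points to targeting in that channel, while a reason spread across channels points back to the shared qualification criteria.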

5. Map the identified barriers onto the sales conversion funnel

Barriers become solvable when tied to the exact moment a lead hesitates. Mapping them to the funnel shows where to act and where not to waste effort.

6. Use automation to respond to lead hesitation in real time

Hesitation shows up as repeated behaviors signaling uncertainty. Left unanswered, intent weakens before sales can engage.

Automation works best when it intervenes early to stabilize interest before hesitation hardens into disengagement. Conversational UI design is invaluable here for delivering guidance and reassurance on the spot.

Key hesitation patterns and best responses:

- Pricing exploration without inquiry – Users doubt product value.

⚡ Act: Use chatbots or interactive guides to clarify pricing tiers, logic, and typical use cases in context.

- Repeated feature comparison – Users can’t gauge relevance or differentiation.

⚡ Act: Leverage conversational UI to provide tailored, in-flow guidance linking features to observed behavior or industry context.

- Workflow questions – Users struggle to envision daily use.

⚡ Act: Deliver short walkthroughs or examples tied to the tasks the user attempts to complete.

- Customer care spikes – Users worry about reliability.

⚡ Act: Offer immediate reassurance with clear next steps, escalation options, or visibility into support availability.
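The pattern-to-response pairs above can be sketched as simple detection rules. This assumes session activity is available as event counts per lead; the event names, the thresholds (three pricing views, two comparisons, two support tickets), and the response identifiers are all hypothetical and would need tuning against your own data.

```python
from dataclasses import dataclass
from typing import Callable

Session = dict[str, int]  # hypothetical: event name -> count within one session


@dataclass(frozen=True)
class HesitationRule:
    pattern: str
    detect: Callable[[Session], bool]
    response: str  # identifier of the in-flow guidance to show (hypothetical)


RULES = [
    HesitationRule(
        "pricing exploration without inquiry",
        lambda s: s.get("pricing_view", 0) >= 3 and s.get("sales_inquiry", 0) == 0,
        "pricing_tier_guide",
    ),
    HesitationRule(
        "repeated feature comparison",
        lambda s: s.get("comparison_view", 0) >= 2,
        "tailored_feature_walkthrough",
    ),
    HesitationRule(
        "workflow questions",
        lambda s: s.get("workflow_help_search", 0) >= 1,
        "task_walkthrough",
    ),
    HesitationRule(
        "customer care spike",
        lambda s: s.get("support_ticket", 0) >= 2,
        "support_reassurance",
    ),
]


def responses_for(session: Session) -> list[str]:
    """Return the in-flow responses to trigger for this session, in rule order."""
    return [rule.response for rule in RULES if rule.detect(session)]
```

Keeping each rule as a named pattern plus a single response makes it easy to audit which nudges fire and to retire rules that don’t move leads forward.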

🔍 Find out whether your business is ready for chatbot digital transformation.

7. Personalize the experience for high-value leads

Embed personalization deep into your UX strategy. Identify leads with clear fit and commercial intent using signals you already have, like pricing exploration or deep trial engagement.

Apply personalization without overreach:

- Use short conversational prompts to confirm intent

- Surface answers where hesitation happens, such as pricing or feature comparison

- Keep interactions brief, optional, and easy to ignore

8. Nurture leads with targeted content

Effective nurture streams are built around intent. Content should answer the specific questions a lead is likely weighing based on their readiness to engage with sales.

✅ Implementation guidance:

- Map each piece of content to a single decision question

- Suppress introductory material once SQL signals appear

- Sequence content based on observed behavior

- Audit nurture streams regularly to remove assets that don’t support progression
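A minimal sketch of the first three guidelines, assuming each asset is tagged with one decision question and a stage. The asset names, the stage labels (`"intro"` for clarity, `"proof"` for late-stage evidence), and the `SQL_SIGNALS` set are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical behaviors that mark a lead as approaching SQL status.
SQL_SIGNALS = {"pricing_exploration", "demo_intent", "trial_depth"}


@dataclass(frozen=True)
class Asset:
    name: str
    decision_question: str  # exactly one question per asset
    stage: str              # "intro" (clarity) or "proof" (late-stage evidence)


def next_assets(catalog: list[Asset], behaviors: set[str], seen: set[str]) -> list[Asset]:
    """Pick unseen assets; drop introductory material once any SQL signal appears."""
    late_stage = bool(behaviors & SQL_SIGNALS)
    candidates = [a for a in catalog if a.name not in seen]
    if late_stage:
        candidates = [a for a in candidates if a.stage != "intro"]
    # Early-stage leads see clarity first; late-stage leads see proof first.
    preferred = "proof" if late_stage else "intro"
    return sorted(candidates, key=lambda a: a.stage != preferred)
```

Filtering on `seen` also addresses the earlier problem of messaging that repeats what the lead already knows.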

🔍 For a deeper perspective on aligning content with user behavior and system design, see our guide on product roadmap and design system audit.

9. Identify drop-off triggers and optimize accordingly

Drop-offs follow repeatable patterns. The goal here is to spot where intent weakens and adjust the experience exactly at that point.

Start by reviewing user behavior around key decision moments. Look for recurring pauses or inactivity windows that separate leads who progress from those who stall.

Common drop-off triggers:

- After pricing exposure: Signals unclear scope, value alignment, or plan differentiation. Add contextual pricing explanations and clarify what changes at each tier.

- During product explanation: Suggests feature relevance or workflow fit isn’t obvious. Simplify explanations and tie features directly to the lead’s primary use case.

- After initial outreach: Indicates uncertainty about next steps or timing. Clearly explain what happens next and why engagement now matters.

✅ How to act on drop-off insights:

- Track inactivity windows after key trial actions

- Compare the behavior between leads that convert and those that stall

- Focus on triggers that appear consistently

- Adjust messaging or guidance within the existing experience
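The first two steps can be sketched as follows, assuming timestamped trial events per lead and knowledge of which leads eventually converted. The code measures the longest silence after a key action (up to the next event, or to the end of the observation window) and compares the median for converters and stalled leads. The `"pricing_view"` action name used below is hypothetical.

```python
from datetime import datetime, timedelta
from statistics import median
from typing import Optional

Event = tuple[datetime, str]  # (timestamp, event name) for a single lead


def inactivity_after(
    events: list[Event], key_action: str, observed_until: datetime
) -> Optional[timedelta]:
    """Longest silence following any occurrence of `key_action`.

    The gap runs to the lead's next event, or to `observed_until` if nothing followed.
    """
    ordered = sorted(events)
    gaps = []
    for i, (ts, name) in enumerate(ordered):
        if name != key_action:
            continue
        next_ts = ordered[i + 1][0] if i + 1 < len(ordered) else observed_until
        gaps.append(next_ts - ts)
    return max(gaps) if gaps else None


def median_gap_hours(
    leads: list[list[Event]], key_action: str, observed_until: datetime
) -> Optional[float]:
    """Median post-action silence, in hours, across a group of leads."""
    gaps = [inactivity_after(events, key_action, observed_until) for events in leads]
    hours = [g.total_seconds() / 3600 for g in gaps if g is not None]
    return median(hours) if hours else None
```

Calling `median_gap_hours` separately for converted and stalled leads gives a quick read on whether a given action is a drop-off trigger: a large gap difference after pricing exposure, for instance, suggests that is where guidance is missing.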

10. Measure conversion rates and iterate

Conversion metrics should clearly explain why leads progress or hesitate to move forward. The objective here is to monitor the handoff as a system of related metrics.

- MQL to SQL rate: Indicates whether qualification criteria reflect real buying intent. Review it whenever the rate shifts suddenly, since abrupt shifts often signal scoring or targeting drift.

- SQL acceptance rate: Shows how often sales agrees with marketing’s qualification. Declines point to misaligned definitions or premature escalation.

- Time to first sales contact: Reflects how quickly intent is acted on. Delays here often explain lost momentum more clearly than lead quality.

- Deal quality after conversion: Confirms whether SQLs progress into meaningful opportunities. Weak deal quality suggests upstream misclassification.

✅ How to apply these insights:

- Review metrics together to understand how they interact

- Compare trends month over month to spot structural shifts

- Tie the metric movement to specific process changes

- Adjust the strategies above based on the patterns you observe
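Reviewing the metrics together is easier when they come from one calculation. A minimal sketch, assuming a flat export of lead records; the field names and the deal-quality proxy (share of accepted SQLs that produced an opportunity at or above a hypothetical `min_deal_value`) are assumptions, so substitute whatever your CRM actually records.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median
from typing import Optional


@dataclass
class LeadRecord:
    became_mql: bool
    became_sql: bool
    sql_accepted: bool                    # sales agreed with marketing's qualification
    intent_at: Optional[datetime]         # first high-intent signal
    first_contact_at: Optional[datetime]  # first sales touch
    opportunity_value: float = 0.0        # 0 if no opportunity was created


def handoff_metrics(
    records: list[LeadRecord], min_deal_value: float = 5_000
) -> dict[str, Optional[float]]:
    """Compute the four handoff metrics; a metric is None when its denominator is empty."""
    mqls = [r for r in records if r.became_mql]
    sqls = [r for r in mqls if r.became_sql]
    accepted = [r for r in sqls if r.sql_accepted]
    response_hours = [
        (r.first_contact_at - r.intent_at).total_seconds() / 3600
        for r in sqls
        if r.intent_at is not None and r.first_contact_at is not None
    ]

    def ratio(part: list, whole: list) -> Optional[float]:
        return len(part) / len(whole) if whole else None

    quality = [r for r in accepted if r.opportunity_value >= min_deal_value]
    return {
        "mql_to_sql_rate": ratio(sqls, mqls),
        "sql_acceptance_rate": ratio(accepted, sqls),
        "median_hours_to_first_contact": median(response_hours) if response_hours else None,
        "deal_quality_rate": ratio(quality, accepted),
    }
```

Running this once per month and storing the results side by side supports the month-over-month comparison above, and makes it easier to tie a shift in one metric to a specific process change.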

Secure MQL to SQL conversion with deliberate design solutions

MQL to SQL conversion improves when intent is understood and signals are interpreted in context. The strongest pipelines are built by teams that treat this transition as a shared decision point informed by behavior and fit.

Design plays a central role here. It shapes how value is understood, how intent is expressed, and how confidently leads move toward a sales conversation. If you’re evaluating where your MQL→SQL transition breaks down and how experience design can correct it, reach out for an expert talk-through.
